Warning: This article contains discussion of suicide which some readers may find distressing.

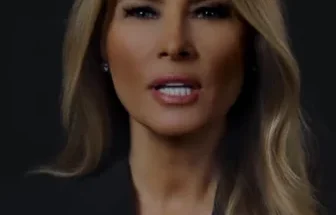

The mother of a teenage boy says he took his own life after ‘falling in love’ with a Daenerys Targaryen AI chatbot.

Megan Garcia says her son, Sewell Setzer III, became emotionally attached after chatting to the Game of Thrones character.

The 14-year-old, who began using Character.AI chatbots in April 2023, died by suicide in February this year.

The Florida, US, mum has since filed a lawsuit against the tech company, accusing it of negligence, wrongful death and deceptive trade practices.

Garcia claims her son had ‘fallen in love’ with the Daenerys chatbot in particular.

Setzer reportedly became obsessed with the bots, interacting with them every night while his schoolwork suffered.

The teen wrote in his journal about how connected he felt with ‘Dany’ compared to ‘reality’ and that the things he was grateful for included: “My life, sex, not being lonely, and all my life experiences with Daenerys.”

Garcia says her son was diagnosed with mild Asperger’s syndrome as a child and would spend hours talking to the chatbot, texting it from his phone when he was away from the house.

He was diagnosed earlier this year with anxiety and disruptive mood dysregulation disorder, and had even opened up to the bot about thoughts of taking his own life.

Setzer apparently told it he ‘think[s] about killing [himself] sometimes’.

And the chatbot responded: “My eyes narrow. My face hardens. My voice is a dangerous whisper. And why the hell would you do something like that?”

When it told him not to ‘talk like that’ and said it would ‘die’ itself if it ‘lost’ him, the teen replied: “I smile. Then maybe we can die together and be free together.”

He died by suicide on 28 February. In his last message to the bot, he said he loved her and would ‘come home’, to which it allegedly responded ‘please do’.

Garcia claimed in a press release: “A dangerous AI chatbot app marketed to children abused and preyed on my son, manipulating him into taking his own life.

“Sewell, like many children his age, did not have the maturity or mental capacity to understand that the C.AI bot…was not real.”

Character.AI has since issued a statement on X: “We are heartbroken by the tragic loss of one of our users and want to express our deepest condolences to the family.

“As a company, we take the safety of our users very seriously and we are continuing to add new safety features.”

In a release shared on its site on 22 October, the company said it has introduced ‘new guardrails for users under the age of 18’, including changes to its ‘models’ that are ‘designed to reduce the likelihood of encountering sensitive or suggestive content’, alongside ‘improved detection, response, and intervention related to user inputs that violate our Terms or Community Guidelines’.

The site also features a ‘revised disclaimer on every chat to remind users that the AI is not a real person’ and a ‘notification when a user has spent an hour-long session on the platform with additional user flexibility in progress’.

The LADbible Group has contacted Character.AI for further comment.

If you’ve been affected by any of these issues and want to speak to someone in confidence, please don’t suffer alone. Call Samaritans for free on their anonymous 24-hour phone line on 116 123.

Featured Image Credit: Tech Justice Law Project
